If you have children, then you probably remember them learning to walk, and then to read. If you’re like me, you were probably amazed by how long it took to do things that we don’t even think about. Things that we take for granted in our day-to-day lives, and which seem so obvious to us, take a long time to master.

If you have children, then you probably remember them learning to walk, and then to read. If you’re like me, you were probably amazed by how long it took to do things that we don’t even think about. Things that we take for granted in our day-to-day lives, and which seem so obvious to us, take a long time to master.

You can often see and experience this when you compare how you learn a language as a native speaker, from how you learn as a second language. I grew up speaking English, and never learned all sorts of rules that my non-native-speaking friends learned in school. Similarly, I learned all sorts of rules for Hebrew grammar that my children never learned in school.

It’s thus super easy to take things for granted when you’re an expert. Indeed, that’s almost the definition of an expert — someone who understands a subject so well, that for them things are obvious.

To many developers, and especially Python developers, it’s obvious not only that there are different types of parentheses in Python, but that each type has multiple uses, and do completely different things. But to newcomers, it’s far from obvious when to use round parentheses, square brackets, and/or curly braces.

I’ve thus tried to summarize each of these types of parentheses, when we use them, and where you might get a surprise as a result. If you’re new to Python, then I hope that this will help to give you a clearer picture of what is used when.

I should also note that the large number of parentheses that we use in Python means that using an editor that colorizes both matching and mismatched parentheses can really help. On no small number of occasions, I’ve been able to find bugs quickly thanks to the paren-coloring system in Emacs.

Regular parentheses — ()

Callables (functions and classes)

Perhaps the most obvious use for parentheses in Python is for calling functions and creating new objects. For example:

x = len('abcd')

i = int('12345')

It’s worth considering what happens if you don’t use parentheses. For example, I see the following code all the time in my courses:

d = {'a':1, 'b':2, 'c':3}

for key, value in d.items:

print(f"{key}: {value}")

When you try to run this code, you can an error message that is true, but whose meaning isn’t completely obvious:

TypeError: 'builtin_function_or_method' object is not iterable

Huh? What the heck does this mean?

It’s worth remembering how “for” loops work in Python:

- “for” turns to the object at the end of the line, and asks whether it’s iterable

- if so, then “for” asks the object for its next value

- whenever the object says, “no more!” the loop stops

In this case, “for” turns to the method “d.items” and asks if it’s iterable. Note that we’re not asking whether the output from “d.items” is iterable, but rather whether the method itself is iterable.

That’s because there’s a world of difference between “d.items” and “d.items()”. The first returns the method. The second returns an iterable sequence of name-value pairs from the dictionary “d”.

The solution to this non-working code is thus to add parentheses:

d = {'a':1, 'b':2, 'c':3}

for key, value in d.items():

print(f"{key}: {value}")

Once we do that, we get the desired result.

I should note that we also need to be careful in the other direction: Sometimes, we want to pass a function as an argument, and not execute it. One example is when we’re in the Jupyter notebook (or other interactive Python environment) and ask for help on a function or method:

help(len)

help(str.upper)

In both of the above cases, we don’t want to get help on the output of those functions; rather, we want to get help on the functions themselves.

Prioritizing operations

In elementary school, you probably learned the basic order of arithmetic operations — that first we multiply and divide, and only after do we add and subtract.

Python clearly went to elementary school as well, because it follows this order. For example:

In [1]: 2 + 3 * 4

Out[1]: 14

We can change the priority by using round parentheses:

In [2]: (2 + 3) * 4

Out[2]: 20

Experienced developers often forget that we can use parentheses in this way, as well — but this is, in many ways, the most obvious and natural way for them to be used by new developers.

Creating tuples

Of course, we can also use () to create tuples. For example:

In [8]: t = (10,20,30)

In [9]: type(t)

Out[9]: tuple

What many beginning Python developers don’t know is that you actually don’t need the parentheses to create the tuple:

In [6]: t = 10,20,30

In [7]: type(t)

Out[7]: tuple

Which means that when you return multiple values from a function, you’re actually returning a tuple:

In [3]: def foo():

...: return 10, 20, 30

...:

...:

In [4]: x = foo()

In [5]: x

Out[5]: (10, 20, 30)

What surprises many newcomers to Python is the following:

In [10]: t = (10)

In [11]: type(t)

Out[11]: int

“Wait,” they say, “I used parentheses. Shouldn’t t be a tuple?”

No, t is an integer. When Python’s parser sees something like “t = (10)”, it can’t know that we’re talking about a tuple. Otherwise, it would also have to parse “t = (8+2)” as a tuple, which we clearly don’t want to happen, assuming that we want to use parentheses for prioritizing operations (see above). And so, if you want to define a one-element tuple, you must use a comma:

In [12]: t = (10,)

In [13]: type(t)

Out[13]: tuple

Generator expressions

Finally, we can use round parentheses to create “generators,” using what are known as “generator expressions.” These are a somewhat advanced topic, requiring knowledge of both comprehensions and iterators. But they’re a really useful tool, allowing us to describe a sequence of data without actually creating each element of that sequence until it’s needed.

For example, if I say:

In [17]: g = (one_number * one_number

...: for one_number in range(10))

The above code defines “g” to be a generator, the result of executing our generator expression. “g” is than an iterable, an object that can be placed inside of a “for” loop or a similar context. The fact that it’s a generator means that we can have a potentially infinite sequence of data without actually needing to install an infinite amount of RAM on our computers; so long as we can retrieve items from our iterable one at a time, we’re set.

The above generator “g” doesn’t actually return 10 numbers. Rather, it returns one number at a time. We can retrieve them all at once by wrapping it in a call to “list”:

In [18]: list(g)

Out[18]: [0, 1, 4, 9, 16, 25, 36, 49, 64, 81]

But the whole point of a generator is that you don’t want to do that. Rather, you will get each element, one at a time, and thus reduce memory use.

Something funny happens with round parentheses when they’re used on a generator expression in a function call. Let’s say I want to get a string containing the elements of a list of integers:

In [19]: mylist = [10, 20, 30]

In [20]: '*'.join(mylist)

This fails, because the elements of “mylist” are integers. We can use a generator expression to turn each integer into a string:

In [21]: '*'.join((str(x)

...: for x in mylist))

Out[21]: '10*20*30'

Notice the double parentheses here; the outer ones are for the call to str.join, and the inner ones are for the generator expression. Well, it turns out that we can remove the inner set:

In [22]: '*'.join(str(x)

...: for x in mylist)

Out[22]: '10*20*30'

So the next time you see a call to a function, and a comprehension-looking thing inside of the parentheses, you’ll know that it’s a generator expression, rather than an error.

Cheating Python’s indentation rules

Python is famous for its use of indentation to mark off blocks of code, rather than curly braces, begin/end, or the like. In my experience, using indentation has numerous advantages, but tends to shock people who are new to the language, and who are somewhat offended that the language would dictate how and when to indent code.

There are, however, a few ways to cheat (at least a little) when it comes to these indentation rules. For example, let’s say I have a dictionary representing a person, and I want to know if the letter ‘e’ is in any of the values. I can do something like this:

In [39]: person = {'first':'Reuven', 'last':'Lerner', 'email':'reuven@lerner.co.il'}

In [40]: if 'e' in person['first'] or 'e' in person['last'] or 'e' in person['email']:

...: print("Found it!")

...:

Found it!

That “if” line works, but it’s far too long to be reasonably readable. What I’d love to do is this:

In [40]: if 'e' in person['first'] or

'e' in person['last'] or

'e' in person['email']:

...: print("Found it!")

The problem is that the above code won’t work; Python will get to the end of the first “or” and complain that it reached the end of the line (EOL) without a complete statement.

The solution is to use parentheses. That’s because once you’ve opened parentheses, Python is much more forgiving and flexible regarding indentation. For example, I can write:

In [41]: if ('e' in person['first'] or

...: 'e' in person['last'] or

...: 'e' in person['email']):

...: print("Found it!")

...:

...:

Found it!

Our code is now (in my mind) far more readable, thanks to the otherwise useless parentheses that I’ve added.

By the way, this is true for all parentheses. So if I want to define my dict on more than one line, I can say:

In [42]: person = {'first':'Reuven',

...: 'last':'Lerner',

...: 'email':'reuven@lerner.co.il'}

Python sees the opening { and is forgiving until it finds the matching }. In the same way, we can open a list comprehension on one line and close it on another. For years, I’ve written my list comprehensions on more than one line, in the belief that they’re easier to read, write, and understand. For example:

[one_number * one_number

for one_number in range(10)]

Square brackets — []

Creating lists

We can create lists with square brackets, as follows:

mylist = [ ] # empty list

mylist = [10, 20, 30] # list with three items

Note that according to PEP 8, you should write an empty list as [], without any space between the brackets. I’ve found that with certain fonts, the two brackets end up looking like a square, and are hard for people in my courses to read and understand. So I always put a space between the brackets when creating an empty list.

(And yes, I’m that rebellious in real life, not just when programming.)

We can use square brackets not just to create lists with explicitly named elements, but also to create lists via list comprehensions:

In [16]: [one_number * one_number

...: for one_number in range(10)]

...:

Out[16]: [0, 1, 4, 9, 16, 25, 36, 49, 64, 81]

The square brackets tell Python that this is a list comprehension, producing a list. If you use curly braces, you’ll get either a set or a dict back, and if you use regular parentheses, you’ll get a generator expression (see above).

Requesting individual items

Many people are surprised to discover that in Python, we always use square brackets to retrieve from a sequence or dictionary:

In [23]: mylist = [10, 20,30]

In [24]: t = (10, 20, 30)

In [25]: d = {'a':1, 'b':2, 'c':3}

In [26]: mylist[0]

Out[26]: 10

In [27]: t[1]

Out[27]: 20

In [28]: d['c']

Out[28]: 3

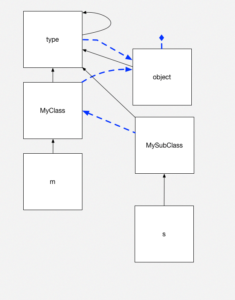

Why don’t we use regular parentheses with tuples and curly braces with dictionaries, when we want to retrieve an element? The simple answer is that square brackets, when used in this way, invoke a method — the __getitem__ method.

That’s right, there’s no difference between these two lines of code:

In [29]: d['c']

Out[29]: 3

In [30]: d.__getitem__('c')

Out[30]: 3

This means that if you define a new class, and you want instances of this class to be able to use square brackets, you just need to define __getitem__. For example:

In [31]: class Foo(object):

...: def __init__(self, x):

...: self.x = x

...: def __getitem__(self, index):

...: return self.x[index]

...:

In [32]: f = Foo('abcd')

In [33]: f[2]

Out[33]: 'c'

See? When we say f[2], that’s translated into f.__getitem__(2), which then returns “self.x[index]”.

The fact that square brackets are so generalized in this way means that Python can take advantage of them, even on user-created objects.

Requesting slices

You might also be familiar with slices. Slices are similar to individual indexes, except that they describe a range of indexes. For example:

In [46]: import string

In [47]: string.ascii_lowercase[10:20]

Out[47]: 'klmnopqrst'

In [48]: string.ascii_lowercase[10:20:3]

Out[48]: 'knqt'

As you can see, slices are either of the form [start:end+1] or [start:end+1:stepsize]. (If you don’t specify the stepsize, then it defaults to 1.)

Here’s a little tidbit that took me a long time to discover: You can get an IndexError exception if you ask for a single index beyond the boundaries of a sequence. But slices don’t have in such problems; they’ll just stop at the start or end of your string:

In [50]: string.ascii_lowercase[500]

---------------------------------------------------------------------------

IndexError Traceback (most recent call last)

<ipython-input-50-fad7a1a4ec3e> in <module>()

----> 1 string.ascii_lowercase[500]

IndexError: string index out of range

In [51]: string.ascii_lowercase[:500]

Out[51]: 'abcdefghijklmnopqrstuvwxyz'

How do the square brackets distinguish between an individual index and a slice? The answer: They don’t. In both cases, the __getitem__ method is being invoked. It’s up to __getitem__ to check to see what kind of value it got for the “index” parameter.

But wait: If we pass an integer or string (or even a tuple) to square brackets, we know what type will be passed along. What type is passed to our method if we use a slice?

In [55]: class Foo(object):

...: def __getitem__(self, index):

...: print(f"index = {index}, type(index) = {type(index)}")

...:

In [56]: f = Foo()

In [57]: f[100]

index = 100, type(index) = <class 'int'>

In [58]: f[5:100]

index = slice(5, 100, None), type(index) = <class 'slice'>

In [59]: f[5:100:3]

index = slice(5, 100, 3), type(index) = <class 'slice'>

Notice that in the first case, as expected, we get an integer. But in the second and third cases, we get a slice object. We can create these manually, if we want; “slice” is in the “bulitin” namespace, along with str, int, dict, and other favorites. And as you can see from its printed representation, we can call “slice” much as we do “range”, with start, stop, and step-size arguments. I haven’t often needed or wanted to create slice objects, but you certainly could:

In [60]: s = slice(5,20,4)

In [61]: string.ascii_lowercase[s]

Out[61]: 'fjnr'

In [62]: string.ascii_uppercase[s]

Out[62]: 'FJNR'

In [63]: string.ascii_letters[s]

Out[63]: 'fjnr'

In [64]: string.punctuation[s]

Out[64]: '&*.<'

Curly braces — {}

Creating dicts

The classic way to create dictionaries (dicts) in Python is with curly braces. You can create an empty dict with an empty pair of curly braces:

In [65]: d = {}

In [66]: len(d)

Out[66]: 0

Or you can pre-populate a dict with some key-value pairs:

In [67]: d = {‘a’:1, ‘b’:2, ‘c’:3}

In [68]: len(d)

Out[68]: 3

You can, of course, create dicts in a few other ways. In particular, you can use the “dict” class to create a dictionary based on a sequence of two-element sequences:

In [69]: dict(['ab', 'cd', 'ef'])

Out[69]: {'a': 'b', 'c': 'd', 'e': 'f'}

In [70]: d = dict([('a', 1), ('b', 2), ('c', 3)])

In [71]: d

Out[71]: {'a': 1, 'b': 2, 'c': 3}

In [72]: d = dict(['ab', 'cd', 'ef'])

In [73]: d

Out[73]: {'a': 'b', 'c': 'd', 'e': 'f'}

But unless you need to create a dict programmatically, I’d say that {} is the best and clearest way to go. I remember reading someone’s blog post a few years ago (which I cannot find right now) in which it was found that {} is faster than calling “dict” — which makes sense, since {} is part of Python’s syntax, and doesn’t require a function call.

Of course, {} can also be used to create a dictionary via a dict comprehension:

In [74]: { one_number : one_number*one_number

...: for one_number in range(10) }

...:

Out[74]: {0: 0, 1: 1, 2: 4, 3: 9, 4: 16, 5: 25, 6: 36, 7: 49, 8: 64, 9: 81}

In the above code, we create a dict of the number 0-9 (keys) and their values to the second power (values).

Remember that a dict comprehension creates one dictionary, rather than a list containing many dictionaries.

Create sets

I’ve become quite the fan of Python’s sets. You can think of sets in a technical sense, namely that they are mutable, and contain unique, hashable values. As of Python 3.6, they are stored in insertion order.

But really, it’s just easiest to think of sets as dictionaries without any values. (Yes, this means that sets are nothing more than immoral dictionaries.) Whatever applies to dict keys also applies to the elements of a set.

We can create a set with curly braces:

In [75]: s = {10,20,30}

In [76]: type(s)

Out[76]: set

As you can see, the fact that there is no colon (:) between the name-value pairs allows Python to parse this code correctly, defining ‘s” to be a set, rather than a dict.

Nearly every time I teach about sets, someone tries to create an empty set and add to it, using set.add:

In [77]: s = {}

In [78]: s.add(10)

—————————————————————————

AttributeError Traceback (most recent call last)

<ipython-input-78-721f80ddfefc> in <module>()

—-> 1 s.add(10)

AttributeError: ‘dict’ object has no attribute ‘add’

The error indicates that “s” is a dict, and that dicts lack the “add” method. Which is fine, but didn’t I define “s” to be a set?

Not really: Dicts came first, and thus {} is an empty dict, not an empty set. If you want to create an empty set, you’ll need to use the “set” class:

s = set()

s.add(10)

This works just fine, but is a bit confusing to people starting off in Python.

I often use sets to remove duplicate entries from a list. I can do this with the “set” class (callable), but I can also use the “* argument” syntax when calling a function:

In [79]: mylist = [10, 20, 30, 10, 20, 30, 40]

In [80]: s = {*mylist}

In [81]: s

Out[81]: {10, 20, 30, 40}

Note that there’s a bit difference between {*mylist} (which creates a set from the elements of mylist) and {mylist} which will try to create a set with one element, the list “mylist”, and will fail because lists are unhashable.

Just as we have list comprehensions and dict comprehensions, we also have set comprehensions, which means that we can also say:

In [84]: mylist = [10, 20, 30, 10, 20, 30, 40]

In [85]: {one_number

...: for one_number in mylist}

...:

Out[85]: {10, 20, 30, 40}

str.format

Another place where we can use curly braces is in string formatting. Whereas Python developers used to use the printf-style “%” operator to create new strings, the modern way to do so (until f-strings, see below) was the str.format method. It worked like this:

In [86]: name = ‘Reuven’

In [87]: “Hello, {0}”.format(name)

Out[87]: ‘Hello, Reuven’

Notice that str.format returns a new string; it doesn’t technically have anything to do with “print”, although they are often used together. You can assign the resulting string to a new variable, write it to a file, or (of course) print it to the screen.

str.format looks inside of the string, searching for curly braces with a number inside of them. It then grabs the argument with that index, and interpolates it into the resulting string. For example:

In [88]: 'First is {0}, then is {1}, finally is {2}'.format(10, 20, 30)

Out[88]: 'First is 10, then is 20, finally is 30'

You can, of course, mix things up:

In [89]: 'First is {0}, finally is {2}, then is {1}'.format(10, 20, 30)

Out[89]: 'First is 10, finally is 30, then is 20'

You can also repeat values:

In [90]: 'First is {0}. Really, first is {0}. Then is {1}'.format(10, 20, 30)

Out[90]: 'First is 10. Really, first is 10. Then is 20'

If you’ll be using each argument once and in order, you can even remove the numbers — although I’ve been told that this makes the code hard to read. And besides, it means you cannot repeat values, which is sometimes annoying:

In [91]: 'First is {}, then is {}, finally is {}'.format(10, 20, 30)

Out[91]: 'First is 10, then is 20, finally is 30'

You cannot switch from automatic to manual numbering in curly braces (or back):

In [92]: 'First is {0}, then is {}, finally is {}'.format(10, 20, 30)

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

<ipython-input-92-f00f3adf93eb> in <module>()

----> 1 'First is {0}, then is {}, finally is {}'.format(10, 20, 30)

ValueError: cannot switch from manual field specification to automatic field numbering

str.format also lets you use names instead of values, by passing keyword arguments (i.e., name-value pairs in the format of key=value):

In [93]: 'First is {x}, then is {y}, finally is {z}'.format(x=10, y=20, z=30)

Out[93]: 'First is 10, then is 20, finally is 30'

You can mix positional and keyword arguments, but I beg that you not do that:

In [94]: 'First is {0}, then is {y}, finally is {z}'.format(10, y=20, z=30)

Out[94]: 'First is 10, then is 20, finally is 30'

f-strings

As of Python 3.6, we have an even more modern way to perform string interpolation, using “f-strings”. Putting “f” before the opening quotes allows us to use curly braces to interpolate just about any Python expression we want — from variable names to operations to function/method calls — inside of a string:

In [99]: name = 'Reuven'

In [100]: f"Hello, {name}"

Out[100]: 'Hello, Reuven'

In [101]: f"Hello, {name.upper()}"

Out[101]: 'Hello, REUVEN'

In [102]: f"Hello, {name.split('e')}"

Out[102]: "Hello, ['R', 'uv', 'n']"

I love f-strings, and have started to use them in all of my code. bash, Perl, Ruby, and PHP have had this capability for years; I’m delighted to (finally) have it in Python, too!

from __future__ import braces

Do you sometimes wish that you could use curly braces instead of indentation in Python? Yeah, you’re not alone. Fortunately, the __future__ module is Python’s way of letting you try new features before they’re completely baked into your current Python version. For example, if you’re still using Python 2.7 (and I hope you’re not), you can say

from __future__ import division

and division will always return a float, rather than an integer, even if the two operands are integers.

So go ahead, try:

from __future__ import braces

(Yes, this is part of Python, albeit a silly part. And no, don’t expect to be able to use curly braces instead of indentation any time soon.)

I’m a Unix guy, but the participants in my Python classes overwhelmingly use Windows. Inevitably, when we get to talking about working with files in Python, someone will want to open a file using the complete path to the file. And they’ll end up writing something like this:

I’m a Unix guy, but the participants in my Python classes overwhelmingly use Windows. Inevitably, when we get to talking about working with files in Python, someone will want to open a file using the complete path to the file. And they’ll end up writing something like this: